Introduction

Natural language processing (NLP), a subfield of artificial intelligence (AI), has advanced rapidly in recent years, and ‘prompting’ has become central to working with Large Language Models (LLMs). One of the more advanced prompting strategies is known as ‘Chain of Thought Prompting.’ This article gives an overview of the technique: what it is, how to apply it, and why it helps.

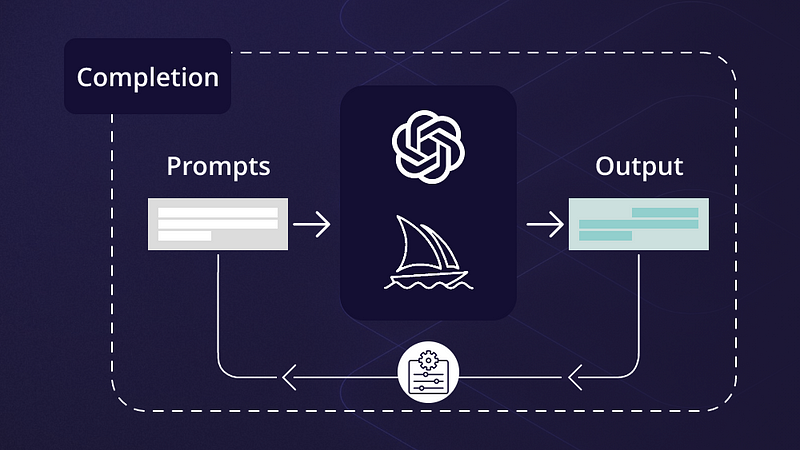

The Foundation: Understanding Prompting

Prompting is the process of giving an LLM such as GPT-3 or GPT-4 an input written so that the model produces a desired output. This input, known as the ‘prompt,’ serves as a directive for the model, guiding it in generating its response. The practice of constructing these prompts effectively is known as ‘Prompt Engineering.’

The Evolution: Chain of Thought Prompting

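Chain of Thought Prompting (CoTP) is an advanced form of prompt engineering. In the research literature, the term usually refers to prompting a model to write out its intermediate reasoning steps before giving a final answer, often within a single response. This article treats it in a multi-turn form: a sequence of prompts designed to guide the LLM through a series of thoughts or ideas. Instead of relying on a single static prompt, this form of CoTP uses multiple prompts, each constructed or adjusted based on the responses the LLM has generated so far.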

This approach can be visualized as a conversation, where each prompt-response pair builds upon the previous pair. The model is not just responding to a single prompt, but to a chain of prompts and responses, much like how a human conversation evolves.
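To make the idea of prompt-response pairs building on each other concrete, here is a minimal sketch of how a chain might be stored and flattened into the text the model sees on the next turn. The `Turn` class, the `build_context` helper, and the `User:`/`Assistant:` labels are illustrative assumptions, not part of any particular LLM API.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Turn:
    """One prompt-response pair in the chain (hypothetical structure)."""
    prompt: str
    response: str


def build_context(history: List[Turn], next_prompt: str) -> str:
    """Flatten earlier pairs plus the new prompt into a single text input."""
    lines = []
    for turn in history:
        lines.append(f"User: {turn.prompt}")
        lines.append(f"Assistant: {turn.response}")
    # The new prompt goes last, followed by a cue for the model to answer.
    lines.append(f"User: {next_prompt}")
    lines.append("Assistant:")
    return "\n".join(lines)


history = [Turn("What is 12 * 4?", "12 * 4 = 48.")]
context = build_context(history, "Now subtract 8 from that result.")
print(context)
```

Because the earlier pair is included in `context`, the second prompt can refer to "that result" without restating it.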

The Process: Implementing Chain of Thought Prompting

Implementing CoTP involves four steps.

1. Initial Prompt: The process starts with an initial prompt that serves as a broad guide for the LLM. This prompt could be a question, a statement, or a scenario, depending on the desired outcome.

2. Response Generation: The LLM generates a response based on the initial prompt.

3. Subsequent Prompting: Based on the response generated by the LLM, a subsequent prompt is created. This prompt is designed to guide the LLM further along the desired line of thought.

4. Iterative Process: Steps 2 and 3 are repeated until the desired outcome is achieved. Each subsequent prompt is designed based on the responses generated by the LLM, leading to a dynamic, evolving conversation.
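The steps above can be sketched as a simple loop. This is a minimal illustration under stated assumptions: `run_chain`, `make_next_prompt`, and `is_done` are hypothetical names, and `fake_model` is an offline stand-in that returns canned replies so the example runs without any LLM service. In practice, `generate` would wrap a call to whichever model you use.

```python
from typing import Callable, List, Tuple


def run_chain(
    generate: Callable[[str], str],
    initial_prompt: str,
    make_next_prompt: Callable[[str], str],
    is_done: Callable[[str], bool],
    max_turns: int = 5,
) -> List[Tuple[str, str]]:
    """Run the prompt/response loop and return the (prompt, response) pairs."""
    transcript: List[Tuple[str, str]] = []
    prompt = initial_prompt  # initial prompt
    for _ in range(max_turns):  # iterate, with a cap so the loop always ends
        # Earlier pairs are passed back in so the model sees the whole chain.
        earlier = "\n".join(f"{p}\n{r}" for p, r in transcript)
        response = generate(f"{earlier}\n{prompt}".strip())  # response generation
        transcript.append((prompt, response))
        if is_done(response):
            break
        prompt = make_next_prompt(response)  # subsequent prompting
    return transcript


def fake_model(text: str) -> str:
    """Offline stand-in for an LLM: replies based on how many prompts it has seen."""
    canned = {1: "A: Draft outline.", 2: "A: Expanded outline.", 3: "A: FINAL summary."}
    return canned.get(text.count("Q:"), "A: FINAL")


transcript = run_chain(
    generate=fake_model,
    initial_prompt="Q: Outline an article on prompting.",
    # Build the next prompt from the response, dropping its "A: " prefix.
    make_next_prompt=lambda response: f"Q: Please refine this: {response[3:]}",
    is_done=lambda response: "FINAL" in response,
)
for prompt, response in transcript:
    print(prompt, "->", response)
```

The `max_turns` cap is a design choice worth keeping in a real implementation, since a model may never produce a response that satisfies the stopping condition.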

The Significance: Why Chain of Thought Prompting?

CoTP holds several advantages over traditional single-prompt strategies.

Contextual Understanding: Because earlier prompts and responses remain part of the input, the LLM can work from a richer picture of the context. Each subsequent prompt-response pair refines that context, which can lead to more relevant and accurate outputs.

Dynamic Conversation: CoTP facilitates a dynamic conversation with the LLM. This conversational approach can make the interaction feel more natural and engaging for the user.

Complex Problem Solving: It helps with more complex problems that require multi-step reasoning. The LLM can be guided through a series of thoughts or steps to reach a conclusion, much like a human conversation or brainstorming session.
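As an illustration of multi-step problem solving, the sketch below breaks a small word problem into three prompts, feeding each answer into the next prompt. The templates, the `solve_in_steps` helper, and the `stub_model` function with its canned replies are all assumptions made for the example; a real model's answers would vary.

```python
from typing import Callable, List

# Hypothetical step templates; {previous} is filled with the prior answer.
STEP_TEMPLATES = [
    "Problem: {problem}\nStep 1: List the quantities given in the problem.",
    "Previous answer: {previous}\nStep 2: State the calculation needed.",
    "Previous answer: {previous}\nStep 3: Carry out the calculation and give the final answer.",
]


def solve_in_steps(generate: Callable[[str], str], problem: str) -> List[str]:
    """Ask one question per step, passing each answer into the next prompt."""
    answers: List[str] = []
    previous = ""
    for template in STEP_TEMPLATES:
        prompt = template.format(problem=problem, previous=previous)
        previous = generate(prompt)
        answers.append(previous)
    return answers


def stub_model(prompt: str) -> str:
    """Offline stand-in for an LLM, returning a canned reply for each step."""
    if "Step 1" in prompt:
        return "3 boxes, 12 pencils per box."
    if "Step 2" in prompt:
        return "Multiply 3 by 12."
    return "3 * 12 = 36 pencils."


answers = solve_in_steps(stub_model, "A shop has 3 boxes of 12 pencils. How many pencils in total?")
for answer in answers:
    print(answer)
```

Unlike a fully dynamic chain, the steps here are planned in advance; only the content carried between them depends on the model's responses.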

Conclusion: Mastering Chain of Thought Prompting

Mastering CoTP requires a thorough understanding of LLMs and of the specific task or domain at hand. It also requires creativity in crafting each follow-up prompt and care in evaluating and interpreting the responses the LLM generates. As prompting strategies like CoTP are explored and refined, the range of tasks LLMs can usefully handle is likely to keep expanding.
