Introduction to Data Reconciliation

In today’s data-driven business landscape, decisions are only as good as the data behind them. Data reconciliation is the process that checks data integrity and reliability across systems and databases. This guide covers its purpose, techniques, tools, and benefits.

What is Data Reconciliation?

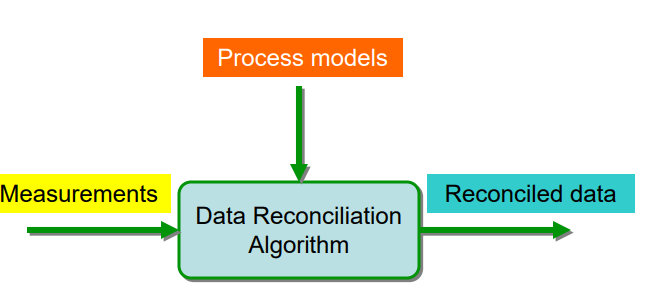

Data reconciliation is the process of comparing and validating data from different sources or systems to ensure consistency, accuracy, and completeness. The process involves identifying discrepancies, inconsistencies, and errors in the data, and taking corrective actions to resolve them. Data reconciliation plays a vital role in maintaining data integrity and supporting informed decision-making in organizations.
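As a rough illustration of the comparison described above, here is a minimal sketch that reconciles two sources keyed by a record ID. The function name `reconcile`, the `crm` and `billing` datasets, and their field names are hypothetical, chosen only for this example.

```python
def reconcile(source, target):
    """Compare two {key: record} mappings and report discrepancies."""
    # Keys present on one side only point to completeness problems.
    missing_in_target = sorted(source.keys() - target.keys())
    missing_in_source = sorted(target.keys() - source.keys())

    # For keys present on both sides, collect fields whose values differ.
    mismatched = {}
    for key in source.keys() & target.keys():
        fields = source[key].keys() | target[key].keys()
        diffs = {
            field: (source[key].get(field), target[key].get(field))
            for field in fields
            if source[key].get(field) != target[key].get(field)
        }
        if diffs:
            mismatched[key] = diffs

    return {
        "missing_in_target": missing_in_target,
        "missing_in_source": missing_in_source,
        "mismatched": mismatched,
    }


# Hypothetical sample data standing in for two real systems.
crm = {
    101: {"name": "Ada", "balance": 250.0},
    102: {"name": "Grace", "balance": 80.0},
    103: {"name": "Linus", "balance": 40.0},
}
billing = {
    101: {"name": "Ada", "balance": 250.0},
    102: {"name": "Grace", "balance": 95.0},
    104: {"name": "Ken", "balance": 10.0},
}

report = reconcile(crm, billing)
print(report)
```

The report separates completeness problems (records missing on one side) from accuracy problems (fields that disagree), which usually call for different corrective actions.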

Importance of Data Reconciliation

Data reconciliation is essential for several reasons, including:

Data Accuracy: Ensuring data accuracy is critical for organizations to make informed decisions and optimize business processes. Data reconciliation helps identify and correct inaccuracies, leading to improved decision-making and overall business performance.

Data Consistency: With data coming from multiple sources, maintaining consistency can be challenging. Data reconciliation helps organizations maintain consistency across different systems and databases, ensuring a unified view of the data.

Regulatory Compliance: Organizations often need to comply with various regulations and standards that mandate data accuracy and integrity. Data reconciliation helps organizations meet these requirements by ensuring that their data is reliable and accurate.

Risk Management: By identifying and resolving data discrepancies and errors, data reconciliation helps organizations mitigate risks associated with inaccurate or inconsistent data, such as financial losses, operational inefficiencies, and reputational damage.

Techniques for Data Reconciliation

There are several techniques for data reconciliation, including:

Manual Reconciliation: Manual reconciliation involves comparing data from different sources or systems by hand, typically in spreadsheets. This method is time-consuming and prone to human error, making it a poor fit for large-scale data reconciliation tasks.

Automated Reconciliation: Automated reconciliation involves using software tools or algorithms to compare and validate data automatically. This method is generally faster and less error-prone than manual reconciliation, particularly when dealing with large volumes of data.
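One common way to automate the comparison is to check control totals first and then compare per-row fingerprints. The sketch below assumes each row is a flat dictionary with an `id` and an `amount` field; the helper names and the sample `ledger` and `warehouse` datasets are hypothetical.

```python
import hashlib


def row_fingerprint(row):
    """Hash a row's fields in a fixed order so equal rows hash equally."""
    canonical = "|".join(f"{key}={row[key]}" for key in sorted(row))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def control_totals(rows, amount_field):
    """Cheap first check: record count and summed amount."""
    total = round(sum(row[amount_field] for row in rows), 2)
    return {"count": len(rows), "total": total}


def changed_keys(source_rows, target_rows, key_field):
    """Return keys present in both datasets whose row contents differ."""
    source = {row[key_field]: row_fingerprint(row) for row in source_rows}
    target = {row[key_field]: row_fingerprint(row) for row in target_rows}
    shared = source.keys() & target.keys()
    return sorted(key for key in shared if source[key] != target[key])


# Hypothetical sample data.
ledger = [
    {"id": 1, "amount": 100.0},
    {"id": 2, "amount": 250.5},
    {"id": 3, "amount": 75.25},
]
warehouse = [
    {"id": 1, "amount": 100.0},
    {"id": 2, "amount": 205.5},
    {"id": 3, "amount": 75.25},
]

if control_totals(ledger, "amount") != control_totals(warehouse, "amount"):
    # Totals disagree, so drill down to the rows that changed.
    print("Rows to investigate:", changed_keys(ledger, warehouse, "id"))
```

Comparing totals first keeps the expensive row-level pass for the cases that need it. Note that the fingerprint depends on how values are rendered as text, so both sources would need consistent types and formatting before hashing.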

Rule-Based Reconciliation: Rule-based reconciliation involves defining specific rules or criteria that must be met for the data to be considered consistent. This approach can help organizations maintain data integrity by ensuring that data conforms to predefined standards or guidelines.
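A rule set of this kind can be sketched as a list of named checks applied to each record. The rules, the field names, and the one-cent tolerance below are illustrative assumptions, not a standard.

```python
# Each rule is a (name, predicate) pair; a record passes a rule
# when its predicate returns True. All rules here are hypothetical.
RULES = [
    ("amount_non_negative", lambda r: r["amount"] >= 0),
    ("currency_is_known", lambda r: r["currency"] in {"USD", "EUR", "GBP"}),
    (
        "amounts_agree_within_one_cent",
        lambda r: abs(r["amount"] - r["ledger_amount"]) <= 0.01,
    ),
]


def check(record, rules=RULES):
    """Return the names of all rules the record violates."""
    return [name for name, predicate in rules if not predicate(record)]


# Hypothetical records that pair a source amount with a ledger amount.
records = [
    {"id": "A1", "amount": 19.99, "currency": "USD", "ledger_amount": 19.99},
    {"id": "A2", "amount": -5.0, "currency": "USD", "ledger_amount": -5.0},
    {"id": "A3", "amount": 50.0, "currency": "XYZ", "ledger_amount": 49.0},
]

failures = {}
for record in records:
    violated = check(record)
    if violated:
        failures[record["id"]] = violated

print(failures)
```

Keeping rules as data rather than hard-coded conditions makes it easier to add, remove, or audit a rule without touching the checking logic.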

Statistical Reconciliation: Statistical reconciliation involves using statistical techniques, such as regression analysis or machine learning algorithms, to flag records whose values deviate from the expected pattern so that they can be investigated and resolved. This method can be particularly useful for detecting complex or subtle inconsistencies that exact-match comparisons miss.
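As a small illustration of the statistical approach, the sketch below flags paired values whose difference is an outlier. It uses a modified z-score based on the median absolute deviation, a deliberately simpler stand-in for the regression or machine learning methods mentioned above. The function name, the sample values, and the 3.5 cutoff are assumptions made for this example.

```python
from statistics import median


def flag_outliers(source_values, target_values, threshold=3.5):
    """Return indexes of pairs whose difference is unusually large.

    Uses the modified z-score: 0.6745 * (d - median) / MAD, where MAD
    is the median absolute deviation of the differences.
    """
    diffs = [t - s for s, t in zip(source_values, target_values)]
    center = median(diffs)
    mad = median([abs(d - center) for d in diffs])

    if mad == 0:
        # No spread at all: any difference away from the median stands out.
        return [i for i, d in enumerate(diffs) if d != center]

    return [
        i
        for i, d in enumerate(diffs)
        if abs(0.6745 * (d - center) / mad) > threshold
    ]


# Hypothetical amounts in cents from two systems, paired by position.
source_cents = [1000, 1520, 980, 2010, 1490, 1760, 1240]
target_cents = [1001, 1518, 980, 2013, 1489, 1880, 1242]

suspects = flag_outliers(source_cents, target_cents)
print(suspects)  # flags index 5, where the two systems differ by 120 cents
```

Small differences of a few cents are treated as ordinary noise here, while the one large gap is surfaced for review. A median-based score is used because a single extreme value would distort a mean and standard deviation computed on so few points.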

Tools for Data Reconciliation

There are various tools available for data reconciliation, ranging from manual methods like spreadsheets to specialized software solutions. Some popular data reconciliation tools include:

Microsoft Excel: Microsoft Excel is a widely used spreadsheet application that can be used for manual data reconciliation tasks. Excel’s built-in functions and features, such as VLOOKUP and conditional formatting, can help users compare and validate data from different sources.

IBM InfoSphere Information Analyzer: IBM InfoSphere Information Analyzer is a comprehensive data quality solution that includes data reconciliation capabilities. It enables users to analyze, validate, and reconcile data from various sources, ensuring data accuracy and consistency.

SAP Data Services: SAP Data Services is a data integration and data quality platform that offers data reconciliation functionality. It allows users to compare and validate data from different sources, identify discrepancies, and take corrective actions to resolve them.

Informatica Data Quality: Informatica Data Quality is a powerful data quality management solution that includes data reconciliation features. It enables organizations to maintain data accuracy and consistency by comparing and validating data from multiple sources, detecting discrepancies, and implementing corrective actions.

Talend Data Quality: Talend Data Quality is a data integration and data quality platform that provides data reconciliation capabilities. It allows users to ensure data accuracy and consistency by comparing data from various sources, identifying inconsistencies, and resolving them.

Oracle Data Integrator (ODI): Oracle Data Integrator is a comprehensive data integration solution that includes data reconciliation features. It enables users to maintain data accuracy and consistency across different systems and databases by comparing and validating data, identifying discrepancies, and taking corrective actions.

Benefits of Data Reconciliation

Implementing data reconciliation processes in organizations offers numerous benefits, including:

Improved Data Quality: Data reconciliation helps organizations ensure data accuracy, consistency, and completeness, leading to improved data quality and reliability.

Informed Decision-Making: Accurate and consistent data is essential for informed decision-making in organizations. Data reconciliation supports this by ensuring that decision-makers have access to reliable, up-to-date data.

Efficient Business Processes: Data discrepancies and errors can lead to inefficient business processes, wasted resources, and financial losses. Data reconciliation helps organizations avoid these issues by identifying and resolving data inconsistencies.

Regulatory Compliance: By ensuring data accuracy and integrity, data reconciliation helps organizations meet regulatory requirements and avoid potential penalties or sanctions.

Risk Mitigation: Data reconciliation enables organizations to identify and address data discrepancies and errors, reducing the risks associated with inaccurate or inconsistent data.

Increased Customer Satisfaction: Accurate and consistent data is essential for providing high-quality customer service and building trust with customers. Data reconciliation helps organizations achieve this by ensuring that their customer data is reliable and up-to-date.

Summary

Data reconciliation is a critical process for maintaining data accuracy and consistency across various systems and databases. By implementing effective data reconciliation techniques and using appropriate tools, organizations can improve data quality, support informed decision-making, and optimize business processes. As the volume and complexity of data continue to grow, so does the need for reconciliation to keep that data trustworthy.