Introduction

Prompt engineering is the practice of crafting effective prompts to elicit desired responses from natural language processing (NLP) models such as GPT-3 or GPT-4. As AI language models continue to evolve and become more powerful, prompt engineering has become increasingly important for developers and researchers alike. This guide explains what prompt engineering is, why it matters, and which best practices help achieve good results.

1. Understanding Prompt Engineering

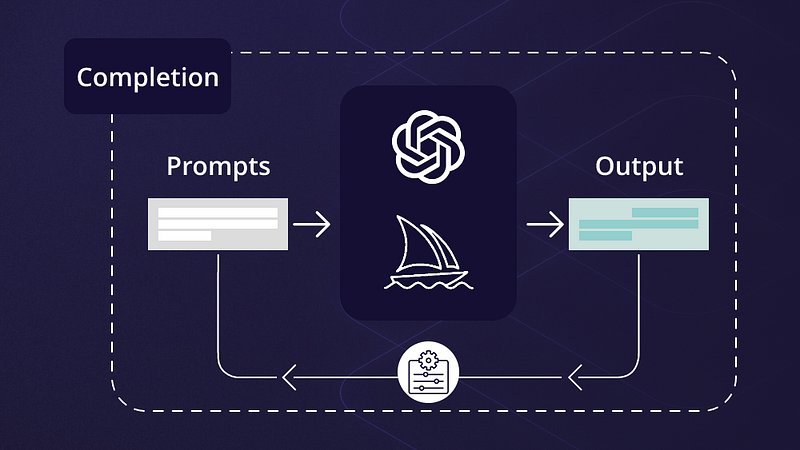

To comprehend prompt engineering, it is essential first to grasp the concept of prompts. In the context of AI language models, a prompt is an input query or text that you provide to the model to generate a specific response or output. Prompt engineering, therefore, refers to the process of designing, refining, and experimenting with different prompts to maximize the effectiveness and accuracy of the model’s output.

2. Why Prompt Engineering Matters

Prompt engineering is crucial for several reasons:

– Improved model performance: Effective prompts can significantly enhance the performance and usefulness of AI language models, resulting in more accurate and contextually relevant outputs.

– Resource optimization: Since large-scale language models can be computationally expensive, prompt engineering helps optimize resources by minimizing the need for repeated queries or excessive fine-tuning.

– Enhanced user experience: Skillful prompt engineering ensures that users receive meaningful and actionable information from AI language models, ultimately improving the overall user experience.

3. Best Practice Guidelines for Prompt Engineering

To excel in prompt engineering, consider the following best practices and techniques:

a. Start with a clear objective

Before designing a prompt, clearly define your objective or the specific outcome you want to achieve. Understanding your goal will help you craft more targeted and effective prompts.

b. Make your prompts explicit

AI language models perform better with explicit prompts that provide clear instructions. Avoid vague or ambiguous language, and specify the format or structure you expect in the model’s response.
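
As a minimal sketch of this guideline, the snippet below contrasts a vague prompt with an explicit one that states the task, the audience, and the expected format. The helper name `build_explicit_prompt` and its parameters are illustrative assumptions, not part of any library.

```python
def build_explicit_prompt(task: str, audience: str, output_format: str) -> str:
    """Assemble a prompt that states the task, the audience, and the expected format."""
    return (
        f"Task: {task}\n"
        f"Audience: {audience}\n"
        f"Output format: {output_format}"
    )


# A vague prompt leaves scope and format up to the model.
vague_prompt = "Tell me about Python lists."

# An explicit prompt pins down what is wanted and how it should look.
explicit_prompt = build_explicit_prompt(
    task="Explain how Python lists differ from tuples.",
    audience="Beginner programmers",
    output_format="A bulleted list with exactly three items.",
)
print(explicit_prompt)
```

Keeping the three fields separate makes it easy to change one of them, for example the output format, without rewriting the whole prompt.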

c. Experiment with various approaches

There is no one-size-fits-all solution for prompt engineering. Experiment with different approaches, such as altering the prompt’s phrasing, structure, or context, to find the optimal prompt for your objective.
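
One way to make this experimentation systematic is to run several prompt variants through the same model and score the responses. The sketch below assumes the model is any callable that maps a prompt string to a response string. `fake_model` and `brevity_score` are hypothetical stand-ins, so replace them with a real model call and a metric that matches your objective.

```python
from typing import Callable


def rank_prompts(
    variants: list[str],
    model: Callable[[str], str],
    score: Callable[[str], float],
) -> list[tuple[float, str]]:
    """Run each prompt variant through the model and sort by score, best first."""
    results = [(score(model(prompt)), prompt) for prompt in variants]
    return sorted(results, key=lambda item: item[0], reverse=True)


def fake_model(prompt: str) -> str:
    # Hypothetical stand-in for a real model call: it answers briefly only when asked to.
    if "one sentence" in prompt:
        return "Paris is the capital of France."
    return (
        "France is a country in Western Europe. Its capital is Paris. "
        "Paris is also its largest city."
    )


def brevity_score(response: str) -> float:
    # Toy metric: responses with fewer words score higher.
    return 1.0 / len(response.split())


variants = [
    "Tell me about the capital of France.",
    "In one sentence, name the capital of France.",
]
ranking = rank_prompts(variants, fake_model, brevity_score)
best_score, best_prompt = ranking[0]
print(best_prompt)
```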

d. Leverage step-by-step instructions

When dealing with complex tasks or multi-step processes, consider breaking down your prompt into step-by-step instructions to guide the AI model more effectively.
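
A small sketch of this idea: the hypothetical helper `build_stepwise_prompt` turns a goal and a list of steps into a numbered prompt. The wording of the instruction line is an assumption. Adjust it to your task.

```python
def build_stepwise_prompt(goal: str, steps: list[str]) -> str:
    """Render a goal followed by numbered steps the model should follow in order."""
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))
    return f"{goal}\nFollow these steps in order:\n{numbered}"


stepwise_prompt = build_stepwise_prompt(
    "Review the customer email below.",
    [
        "Identify the main complaint.",
        "List the facts the customer gives.",
        "Draft a polite two-sentence reply.",
    ],
)
print(stepwise_prompt)
```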

e. Use examples to guide the model

Including examples within your prompt can help clarify your expectations and guide the model toward the desired output. However, be careful not to provide too many examples or make them overly specific: the model may imitate their wording and content too closely instead of generalizing to new inputs.
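
The sketch below assembles such a prompt from an instruction, a short list of input/output examples, and the new input. The `Input:`/`Output:` labels and the helper name `build_few_shot_prompt` are illustrative choices, not a required format.

```python
def build_few_shot_prompt(
    instruction: str,
    examples: list[tuple[str, str]],
    query: str,
) -> str:
    """Combine an instruction, worked examples, and the new input into one prompt."""
    parts = [instruction]
    for text, label in examples:
        parts.append(f"Input: {text}\nOutput: {label}")
    # Leave the final output empty so the model completes it.
    parts.append(f"Input: {query}\nOutput:")
    return "\n\n".join(parts)


few_shot_prompt = build_few_shot_prompt(
    instruction="Classify the sentiment of each review as positive or negative.",
    examples=[
        ("The battery lasts all day.", "positive"),
        ("The screen cracked within a week.", "negative"),
    ],
    query="Setup was quick and painless.",
)
print(few_shot_prompt)
```

Two varied examples are often enough to convey a format. Keeping them short and different from each other reduces the risk that the model simply copies one of them.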

f. Control verbosity and response length

If you require a concise response or wish to limit the output length, incorporate specific instructions in your prompt. For instance, you could ask the model to provide a summary in three sentences or request a brief explanation.
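
Because a model may not always respect a length instruction, it can help to check the response as well. The sketch below appends a sentence limit to a prompt and verifies a response against it. The sentence counter is a rough heuristic (an assumption of this example) and will miscount text that contains abbreviations such as "e.g.".

```python
import re


def with_length_limit(prompt: str, max_sentences: int) -> str:
    """Append an explicit sentence limit to a prompt."""
    return f"{prompt}\nAnswer in at most {max_sentences} sentences."


def count_sentences(text: str) -> int:
    # Rough heuristic: split after '.', '!' or '?' when followed by whitespace.
    pieces = re.split(r"(?<=[.!?])\s+", text.strip())
    return len([piece for piece in pieces if piece])


def within_limit(response: str, max_sentences: int) -> bool:
    """Return True if the response respects the requested sentence limit."""
    return count_sentences(response) <= max_sentences


limited_prompt = with_length_limit("Summarize the article.", 3)
print(limited_prompt)
print(within_limit("Short answer. Still short.", 3))
```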

g. Iterate and refine your prompts

Prompt engineering is an iterative process. Continuously refine and optimize your prompts based on the model’s performance and feedback to achieve the best possible results.

4. Techniques for Advanced Prompt Engineering

In addition to the best practice guidelines, consider exploring the following advanced techniques for prompt engineering:

a. Temperature and sampling parameters

Adjusting the temperature and related sampling parameters, such as top-p, helps control the randomness and creativity of the model’s output. Lower temperature values yield more focused and deterministic responses, while higher values produce more diverse and creative results.
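
To see why temperature has this effect, recall that in the common formulation each candidate token's score (logit) is divided by the temperature before the softmax turns the scores into probabilities. The sketch below applies this to three made-up logits. The values are illustrative and do not come from any real model.

```python
import math


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Convert logits to probabilities after dividing them by the temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [value / temperature for value in logits]
    largest = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(value - largest) for value in scaled]
    total = sum(exps)
    return [value / total for value in exps]


logits = [2.0, 1.0, 0.5]  # made-up scores for three candidate tokens
low = softmax_with_temperature(logits, 0.5)   # sharper distribution
high = softmax_with_temperature(logits, 2.0)  # flatter distribution
print([round(p, 3) for p in low])
print([round(p, 3) for p in high])
```

At the lower temperature most of the probability mass sits on the top-scoring token, so sampling picks it almost every time. At the higher temperature the probabilities move closer together, which makes less likely tokens appear more often.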

b. Utilize external knowledge sources

For tasks that require specific or factual information, consider using external knowledge sources, such as databases or knowledge graphs, alongside the AI model to enhance the accuracy and relevancy of the output.
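
A minimal sketch of this pattern: retrieve the most relevant snippet from a small knowledge base and place it in the prompt as context. The word-overlap ranking below is a toy stand-in for a real search index or database query, and the helper names and the two-entry knowledge base are assumptions made for illustration.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lower-case the text and return its set of words, ignoring punctuation."""
    return set(re.findall(r"\w+", text.lower()))


def retrieve(question: str, documents: list[str], top_k: int = 1) -> list[str]:
    """Rank documents by how many words they share with the question (toy retrieval)."""
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_grounded_prompt(question: str, documents: list[str]) -> str:
    """Insert the retrieved snippets into the prompt as context for the model."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, documents))
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


knowledge_base = [
    "The Eiffel Tower is 330 metres tall.",
    "Mount Everest is 8849 metres tall.",
]
grounded = build_grounded_prompt("How tall is the Eiffel Tower?", knowledge_base)
print(grounded)
```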

c. Experiment with reinforcement learning

Reinforcement learning algorithms, such as Proximal Policy Optimization (PPO) or REINFORCE, can be used to fine-tune an AI language model against a custom reward function. Strictly speaking, this changes the model’s weights rather than the prompt, so it complements prompt engineering rather than replacing it. The approach lets you optimize the model’s output according to specific criteria or desired behaviors.

d. Incorporate user feedback

Collecting and incorporating user feedback into your prompt engineering process is invaluable for improving the model’s performance and user experience. Analyze feedback to identify areas of weakness, and adjust your prompts accordingly to enhance the quality of the output.
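
As a simple illustration, feedback can be aggregated per prompt version to show which version most needs attention. The sketch assumes feedback arrives as (prompt version, rating) pairs on a 1 to 5 scale. Both the data shape and the sample values are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def average_rating_by_prompt(feedback: list[tuple[str, int]]) -> dict[str, float]:
    """Group ratings by prompt version and return the mean rating for each."""
    grouped: dict[str, list[int]] = defaultdict(list)
    for prompt_id, rating in feedback:
        grouped[prompt_id].append(rating)
    return {prompt_id: mean(ratings) for prompt_id, ratings in grouped.items()}


# Hypothetical feedback records: (prompt version, user rating from 1 to 5).
feedback = [("v1", 2), ("v1", 3), ("v2", 5), ("v2", 4)]
averages = average_rating_by_prompt(feedback)
weakest = min(averages, key=averages.get)
print(averages, weakest)
```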

e. Employ prompt chaining

Prompt chaining involves breaking down a complex task into smaller, more manageable prompts and feeding the model’s output from one prompt into the next. This technique can help tackle intricate problems or multi-step processes more effectively.
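
The sketch below shows the mechanics of a chain: each template has an `{input}` slot that receives the previous step's output. The model is assumed to be any callable from prompt string to response string. `recording_model` is a hypothetical stand-in that records the prompts it receives so the hand-off between steps is visible.

```python
from typing import Callable


def run_chain(
    templates: list[str],
    initial_input: str,
    model: Callable[[str], str],
) -> str:
    """Feed each step's output into the {input} slot of the next template."""
    current = initial_input
    for template in templates:
        current = model(template.format(input=current))
    return current


calls: list[str] = []


def recording_model(prompt: str) -> str:
    # Hypothetical stand-in for a real model call: it logs the prompt and
    # returns a placeholder so the chain's data flow can be inspected.
    calls.append(prompt)
    return f"<output {len(calls)}>"


templates = [
    "Extract the key facts from this text: {input}",
    "Summarize these facts in one sentence: {input}",
]
final = run_chain(templates, "Long article text", recording_model)
print(calls)
print(final)
```

Note that `str.format` treats any other braces in a template as placeholders, so templates that contain literal braces (for example JSON snippets) need them doubled.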

f. Test across various model versions

When working with AI language models, it’s essential to test your prompts across multiple model versions to ensure consistent performance and output quality. Different models may respond differently to the same prompt, so it’s crucial to evaluate and refine your prompts accordingly.
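
A lightweight way to do this is to run the same prompt through each model and apply the same automated check to every response. In the sketch below, the model names and their canned replies are hypothetical stand-ins. In practice each entry would wrap a call to a real model version.

```python
from typing import Callable


def compare_models(
    prompt: str,
    models: dict[str, Callable[[str], str]],
    check: Callable[[str], bool],
) -> dict[str, bool]:
    """Send the same prompt to every model and record whether each response passes."""
    return {name: check(model(prompt)) for name, model in models.items()}


def is_bare_number(response: str) -> bool:
    # The prompt asks for digits only, so anything else counts as a failure.
    return response.strip().isdigit()


# Hypothetical stand-ins for two model versions that format answers differently.
models = {
    "model-v1": lambda prompt: "42",
    "model-v2": lambda prompt: "The answer is 42.",
}
results = compare_models("What is 6 times 7? Reply with digits only.", models, is_bare_number)
print(results)
```

A failing entry signals that the prompt needs adjusting for that model version, for example by making the format instruction more explicit.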

5. Ethics and Considerations in Prompt Engineering

As with any AI application, it’s essential to consider the ethical implications of prompt engineering:

– Avoid biased or discriminatory prompts: Ensure that your prompts do not perpetuate biases or encourage discriminatory behavior from the AI model.

– Respect user privacy: When using user-generated content or data in your prompts, ensure that you have the necessary permissions and maintain user privacy.

– Limit harmful or malicious outputs: Craft your prompts to minimize the potential for AI-generated outputs that may be harmful, offensive, or malicious.
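
As one small, partial illustration of the privacy point, personal details can be masked before user text is placed in a prompt. The sketch below redacts email addresses with a simplified regular expression. The pattern is an assumption of this example, it will not catch every valid address, and it does nothing about other kinds of personal data.

```python
import re

# Simplified pattern for common email addresses (not a full validator).
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def redact_emails(text: str) -> str:
    """Replace anything that looks like an email address with a placeholder."""
    return EMAIL_PATTERN.sub("[EMAIL]", text)


user_message = "Please reply to jane.doe@example.com about my order."
safe_prompt = f"Summarize this support request: {redact_emails(user_message)}"
print(safe_prompt)
```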

Summary

Prompt engineering is a vital skill for maximizing the effectiveness and usefulness of AI language models in various applications. By adhering to the best practice guidelines and techniques outlined in this guide, you can improve model performance, optimize resources, and enhance the user experience. As you delve deeper into prompt engineering, remember to continuously iterate and refine your prompts, experiment with advanced techniques, and consider the ethical implications of your work. With dedication and perseverance, you can master prompt engineering and unleash the full potential of AI language models for your projects and endeavors.
