Introduction to Star Schema in Data Warehouse Modeling

The star schema is a widely used data warehouse modeling technique that offers simplicity, efficiency, and improved query performance in business intelligence (BI) and analytical applications. This comprehensive guide will explore the concepts, design principles, and advantages of the star schema in data warehouse modeling, providing a solid foundation for understanding and implementing this technique in various industries and use cases.

Star Schema: Core Concepts and Components

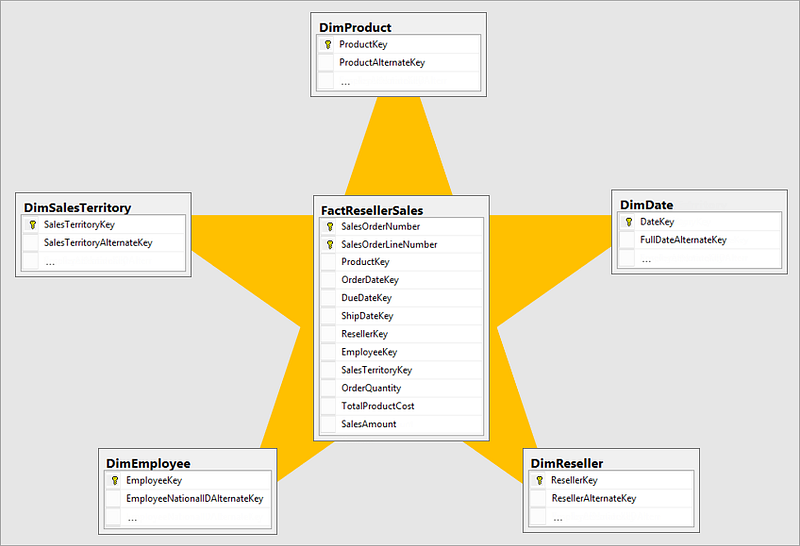

The star schema is a type of dimensional modeling technique that organizes data into a logical structure composed of a central fact table and one or more surrounding dimension tables. The fact table contains quantitative data, while the dimension tables store descriptive information. To better understand the star schema, it is essential to familiarize yourself with its key components:

Fact Table: The fact table is the central table in a star schema that stores the numerical data, such as sales, revenue, or profit. Fact tables contain fact records, which represent individual transactions or events, along with foreign keys that reference the associated dimension tables.

Dimension Table: Dimension tables are connected to the fact table via primary and foreign key relationships and store the descriptive information, such as customer details, product information, or date attributes. Dimension tables provide context for the facts and are used to filter, group, or categorize the data in various ways.

Primary and Foreign Keys: Primary keys are unique identifiers for each row in a table, while foreign keys are used to establish relationships between the fact table and dimension tables. In a star schema, the fact table contains foreign keys that reference the primary keys of the dimension tables, creating a “star” shape.

Design Principles for Star Schema in Data Warehouse Modeling

When designing a star schema for a data warehouse, consider the following key principles:

Denormalization: Star schema design often involves denormalizing the data, which means combining related tables to reduce the number of joins required in analytical queries. While denormalization can lead to data redundancy, it can also improve query performance and simplify the data structure.

Surrogate Keys: Use surrogate keys, which are system-generated, unique identifiers, for the primary keys in dimension tables. Surrogate keys can improve query performance and maintain data integrity, as they are not affected by changes in the natural keys (i.e., keys derived from the actual data).

Data Granularity: Determine the appropriate level of granularity for your fact table, which represents the lowest level of detail at which the data is stored. The granularity should be based on your organization’s specific reporting and analytical requirements.

Conformed Dimensions: Conformed dimensions are dimensions that are shared across multiple fact tables, ensuring consistency and enabling cross-functional analysis. Design your star schema with conformed dimensions to facilitate data integration and enable more comprehensive reporting and analysis.

Advantages of Star Schema in Data Warehouse Modeling

The star schema offers several advantages in the context of data warehouse modeling and business intelligence, including:

Simplicity: The star schema’s straightforward structure, with a central fact table and surrounding dimension tables, makes it easy to understand and navigate, even for non-technical users.

Query Performance: By reducing the number of joins required in analytical queries and using surrogate keys, the star schema can significantly improve query performance, enabling faster data retrieval and reporting.

Scalability: The star schema can easily accommodate growth in data volume and complexity, making it a scalable solution for organizations with evolving data warehousing and business intelligence needs.

Flexible Data Analysis: The star schema’s structure enables flexible data analysis, allowing users to filter, group, and aggregate data in various ways to support data-driven decision-making. This flexibility empowers users to explore and analyze data according to their specific needs and requirements.

Efficient Data Storage: Although denormalization can lead to data redundancy, it can also help optimize data storage and reduce the overall storage requirements for a data warehouse. By combining related tables and reducing the number of joins, the star schema can streamline the data structure and improve storage efficiency.

Easier Maintenance: The simplicity of the star schema makes it easier to maintain and update the data warehouse over time, ensuring data integrity and consistency across the organization.

Implementing Star Schema in Data Warehouse Modeling: Best Practices

When implementing a star schema in a data warehouse, consider the following best practices:

Define Business Requirements: Begin by identifying the specific business requirements and objectives of your data warehouse and BI applications. This will help guide the design of your star schema and ensure that it meets the needs of your organization.

Identify Relevant Facts and Dimensions: Determine the key facts and dimensions that are most relevant to your business requirements and will support the desired analytical capabilities.

Design Fact and Dimension Tables: Design your fact table with the appropriate granularity and use surrogate keys for the primary keys in your dimension tables. Ensure that your fact table contains foreign keys that reference the primary keys of the dimension tables, creating the star shape.

Incorporate Conformed Dimensions: Design your star schema with conformed dimensions to facilitate data integration and enable more comprehensive reporting and analysis across multiple fact tables.

Optimize Data Storage and Performance: Apply denormalization and surrogate keys to optimize data storage and improve query performance in your star schema.

Test and Validate the Schema: Thoroughly test and validate your star schema to ensure that it meets the business requirements and supports the desired analytical capabilities.

Monitor and Update the Schema: Regularly monitor the performance of your data warehouse and BI applications, and update the star schema as needed to accommodate changes in business requirements or data volume.

Conclusion

The star schema is a powerful data warehouse modeling technique that offers simplicity, efficiency, and improved query performance for business intelligence and analytical applications. By organizing data into a central fact table and surrounding dimension tables, the star schema enables flexible data analysis, efficient data storage, and easier maintenance, ultimately supporting data-driven decision-making in various industries and applications. By understanding the core concepts, design principles, and best practices of the star schema, organizations can successfully implement this approach and leverage its many benefits to drive better business outcomes.