Artificial intelligence (AI) and machine learning (ML) have seen significant advancements, leading to complex models that can make predictions with astonishing accuracy. However, these models often resemble a “black box,” making their decisions challenging to interpret. The quest for increased transparency and interpretability in machine learning has given rise to techniques such as RuleFit. This method combines the predictive power of machine learning models with the interpretability of rules. This article delves into the workings of RuleFit, its applications, advantages, and limitations, offering insights into this fascinating machine learning approach.

What is RuleFit?

RuleFit is a method for generating a model that combines rules and linear regression. It was developed by Jerome H. Friedman and Bogdan E. Popescu and is a way of creating interpretable models that can predict an outcome based on various features.

The RuleFit algorithm generates a set of rules from a dataset, then fits a sparse linear model over those rules, together with the original features, using L1-regularized (Lasso) regression. Each rule in the RuleFit model acts as a binary feature. For regression tasks, the model is fitted by minimizing the sum of squared residuals plus an L1 penalty on the coefficients, which is ordinary least squares with an added sparsity term.

Rule Generation in RuleFit

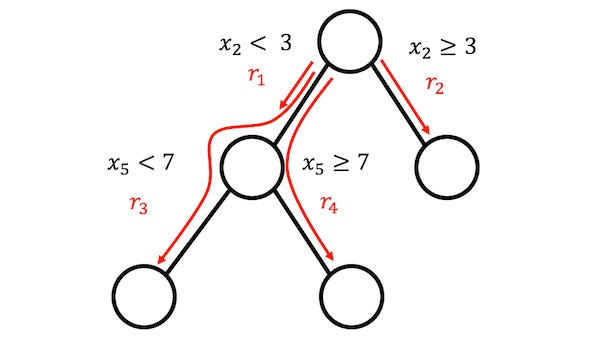

The first step in the RuleFit algorithm is the generation of rules. These rules are derived from the features in the dataset using decision trees. RuleFit uses gradient boosting to create an ensemble of decision trees, from which rules are extracted. Each path from the root to a node in a tree, whether that node is an internal node or a leaf, defines a rule: the conjunction of the split conditions along that path.

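For example, in a dataset with features A and B, a decision tree might split first on feature A (e.g., A < 30), then on feature B (e.g., B > 20). This would create the rule "A < 30 and B > 20", which is either true or false for each instance. The rule itself carries no prediction; how much it contributes to the output is decided later by its coefficient in the linear model.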
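As a minimal sketch of rule extraction, the snippet below walks a hand-built toy tree and collects one rule per non-root node, internal nodes and leaves alike. The nested-dict layout and the names `TREE`, `extract_rules`, and `format_rule` are hypothetical, chosen for illustration and not taken from any RuleFit library. The sketch also assumes that the left branch means `<` and the right branch means `>=`, so the B > 20 condition from the example appears here as B >= 20.

```python
# Hypothetical toy tree (assumed layout, for illustration only).
# Left branch: feature < threshold. Right branch: feature >= threshold.
# A child of None marks a leaf.
TREE = {
    "feature": "A", "threshold": 30,
    "left": {
        "feature": "B", "threshold": 20,
        "left": None,
        "right": None,
    },
    "right": None,
}


def extract_rules(node, path=()):
    """Return one rule per non-root node.

    A rule is a tuple of (feature, operator, threshold) conditions,
    i.e. the conjunction of the splits on the way to that node.
    """
    rules = []
    if path:  # the root has an empty path and yields no rule
        rules.append(path)
    if node is not None:  # internal node: descend into both branches
        feature, threshold = node["feature"], node["threshold"]
        rules += extract_rules(node["left"], path + ((feature, "<", threshold),))
        rules += extract_rules(node["right"], path + ((feature, ">=", threshold),))
    return rules


def format_rule(rule):
    """Render a rule as readable text."""
    return " and ".join(f"{f} {op} {t}" for f, op, t in rule)


rules = extract_rules(TREE)
for rule in rules:
    print(format_rule(rule))
# A < 30
# A < 30 and B < 20
# A < 30 and B >= 20
# A >= 30
```

In a real setting the trees would come from a fitted gradient boosting ensemble, and the same traversal would be repeated for every tree.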

Regression in RuleFit

Once the rules have been generated, the RuleFit model treats each rule as a binary variable in a dataset. If a particular instance (or row) in the dataset satisfies the conditions of the rule, it is marked as 1; otherwise, it is marked as 0.
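The encoding step can be sketched in a few lines of plain Python. The rules, the rows, and the helper names `rule_fires` and `rule_matrix` below are made up for illustration; the point is only to show how each rule becomes a 0/1 column.

```python
import operator

# Comparison operators a rule condition may use in this sketch.
OPS = {"<": operator.lt, ">": operator.gt, ">=": operator.ge}

# Hypothetical rules: each rule is a list of (feature, operator, threshold).
RULES = [
    [("A", "<", 30)],
    [("A", "<", 30), ("B", ">", 20)],
    [("A", ">=", 30)],
]

# Hypothetical dataset rows.
ROWS = [
    {"A": 25, "B": 40},
    {"A": 25, "B": 10},
    {"A": 50, "B": 40},
]


def rule_fires(rule, row):
    """Return 1 if the row satisfies every condition of the rule, else 0."""
    return int(all(OPS[op](row[feature], t) for feature, op, t in rule))


def rule_matrix(rules, rows):
    """Build the binary matrix: one row per instance, one column per rule."""
    return [[rule_fires(rule, row) for rule in rules] for row in rows]


matrix = rule_matrix(RULES, ROWS)
for encoded in matrix:
    print(encoded)
# [1, 1, 0]
# [1, 0, 0]
# [0, 0, 1]
```

Each column of this matrix is then used as an ordinary input feature for the regression step.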

Next, RuleFit fits an L1-regularized linear regression model to the data. L1 regularization (or Lasso) adds a penalty proportional to the sum of the absolute values of the coefficients, which shrinks some of the coefficients to exactly zero. This way, the less important rules (or features) are removed, leading to a more parsimonious model.
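To see how the L1 penalty removes a weak rule, here is a small, unoptimized coordinate-descent Lasso written in plain Python. It is a teaching sketch, not RuleFit's actual solver: the function names, the toy data, and the penalty strength `alpha=0.5` are all assumptions, and the objective is taken to be (1 / 2n) * sum of squared residuals + alpha * sum of |weights|. In practice you would reach for a library implementation such as scikit-learn's `Lasso`.

```python
def soft_threshold(value, penalty):
    """Shrink value toward zero by penalty; return 0.0 inside [-penalty, penalty]."""
    if value > penalty:
        return value - penalty
    if value < -penalty:
        return value + penalty
    return 0.0


def lasso_fit(X, y, alpha, n_iter=200):
    """Fit an intercept and weights by cyclic coordinate descent (sketch)."""
    n, p = len(X), len(X[0])
    weights = [0.0] * p
    intercept = sum(y) / n
    for _ in range(n_iter):
        for j in range(p):
            rho = 0.0   # correlation of feature j with the partial residual
            norm = 0.0  # sum of squares of feature j
            for i in range(n):
                pred_without_j = intercept + sum(
                    X[i][k] * weights[k] for k in range(p) if k != j
                )
                rho += X[i][j] * (y[i] - pred_without_j)
                norm += X[i][j] ** 2
            weights[j] = soft_threshold(rho / n, alpha) / (norm / n) if norm else 0.0
        # The intercept is not penalized: set it to the mean residual.
        intercept = sum(
            y[i] - sum(X[i][k] * weights[k] for k in range(p)) for i in range(n)
        ) / n
    return intercept, weights


# Hypothetical binary rule matrix: column 0 is a strong rule, column 1 a weak one.
X = [[1, 1], [1, 0], [1, 1], [1, 0], [0, 1], [0, 0], [0, 1], [0, 0]]
y = [10.2, 9.8, 10.1, 9.9, 0.1, -0.1, 0.2, -0.2]

intercept, weights = lasso_fit(X, y, alpha=0.5)
print(intercept, weights)
```

On this toy data the strong rule keeps a weight of about 8 (shrunk from its unpenalized value of 10), while the weak rule's weight is set to exactly zero, so it drops out of the model.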

Why RuleFit?

The major advantage of RuleFit is that it combines the prediction power of machine learning with the interpretability of rule-based systems. Here are some key benefits of RuleFit:

Interpretability: Unlike many machine learning models, a RuleFit model is easy to understand. It presents its decisions as a set of rules, which can be interpreted even by non-experts.

Performance: While RuleFit emphasizes interpretability, it often does so without a large loss in predictive performance. On many tabular problems its results are comparable to those of more complex, black-box models, although this depends on the dataset.

Feature Importance: RuleFit provides insights into which features are most important in predicting the outcome. This can be useful in exploratory data analysis and understanding the underlying processes that the data represents.

Flexibility: RuleFit can handle both regression and classification tasks, making it a versatile tool in the machine learning toolkit.
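The feature-importance benefit can be made concrete with the rule importance measure described in Friedman and Popescu's paper: the absolute value of a rule's coefficient multiplied by the standard deviation of the rule's 0/1 indicator, sqrt(s * (1 - s)), where s is the rule's support (the fraction of rows on which it fires). The sketch below assumes that definition; the function name and the example numbers are hypothetical.

```python
import math


def rule_importance(coefficient, support):
    """Importance of a rule: |coefficient| * sqrt(support * (1 - support)).

    support is the fraction of training rows that satisfy the rule.
    """
    return abs(coefficient) * math.sqrt(support * (1.0 - support))


# Hypothetical fitted rules: (description, coefficient, support).
fitted_rules = [
    ("A < 30", 8.0, 0.5),
    ("A < 30 and B > 20", 0.0, 0.25),  # removed by the L1 penalty
    ("A >= 30", -2.0, 0.1),
]

# Rank rules from most to least important.
ranked = sorted(
    fitted_rules,
    key=lambda rule: rule_importance(rule[1], rule[2]),
    reverse=True,
)
for description, coefficient, support in ranked:
    print(description, round(rule_importance(coefficient, support), 3))
```

A rule with a zero coefficient has zero importance, and a rule that fires on almost every row or almost no row is down-weighted because its indicator barely varies.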

Limitations of RuleFit

While RuleFit offers several advantages, it also has a few limitations:

Computationally Intensive: Generating rules from decision trees and fitting a Lasso regression model can be computationally intensive, especially for large datasets with many features.

Less Accurate for Complex Relationships: While RuleFit can capture non-linear relationships, it may not perform as well as other machine learning models when the relationship between features and the target variable is highly complex and non-linear.

Risk of Overfitting: Like other machine learning models, RuleFit is not immune to overfitting, especially when the number of rules is large. Regularization helps mitigate this risk, but it does not entirely eliminate it.

Conclusion

In the quest for more transparent and interpretable machine learning models, RuleFit represents a significant advance. By combining the power of rule-based systems and machine learning, RuleFit delivers models that are not only accurate but also interpretable. While not without limitations, RuleFit’s potential for unveiling the ‘why’ behind predictions makes it a worthy tool in the arsenal of every data scientist. As we move towards a future where accountability and transparency in AI become increasingly important, tools like RuleFit are likely to become even more prominent.
